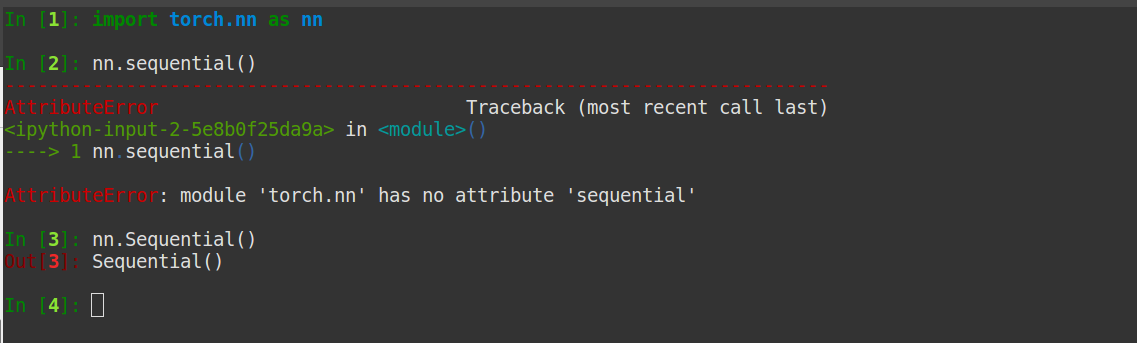

Throughout this tutorial, I will use Google Colab to write and test the code. I will be using the Dogs vs. Cats dataset from Kaggle.

I have a simple question about ResNet-18 (but I really don't understand it). The following is the network structure code of ResNet-18. Only fragments of the code survive in this post; gaps are marked with `# ...`.

    # Define resnet18
    def conv3x3(in_planes, out_planes, stride=1):
        return nn.Conv2d(in_planes, out_planes, kernel_size=3, stride=stride,
                         # remaining arguments are cut off in the post
                         )

    # constructor of the residual block (class header not shown in the post)
    def __init__(self, inplanes, planes, stride=1, downsample=None):
        # ...
        self.conv1 = conv3x3(inplanes, planes, stride)
        # ...

    # constructor of the ResNet class
    def __init__(self, block, layers, num_classes=10):
        # ...
        self.maxpool = nn.MaxPool2d(kernel_size=3, stride=2, padding=1)
        self.layer1 = self._make_layer(block, 64, layers[0])
        self.layer2 = self._make_layer(block, 128, layers[1], stride=2)
        self.layer3 = self._make_layer(block, 256, layers[2], stride=2)
        self.layer4 = self._make_layer(block, 512, layers[3], stride=2)
        # ...
        self.fc = nn.Linear(512 * block.expansion, num_classes)
        # ...
        n = m.kernel_size[0] * m.kernel_size[1] * m.out_channels
        # ... (a weight-initialisation statement ending in `n))` is cut off here)

    def _make_layer(self, block, planes, blocks, stride=1):
        # self.inplanes is the output channel count of the previous block;
        # planes is the input channel count of the current block
        if stride != 1 or self.inplanes != planes * block.expansion:
            # ...
                nn.Conv2d(self.inplanes, planes * block.expansion,
                          kernel_size=1, stride=stride, bias=False),
                nn.BatchNorm2d(planes * block.expansion),
            # ...
        # ...
        layers.append(block(self.inplanes, planes, stride, downsample))
        # ...
            layers.append(block(self.inplanes, planes))
        # ...

    def resnet18(pretrained=False, **kwargs):
        # pretrained (bool): If True, returns a model pre-trained on ImageNet
        # ...
        model.load_state_dict(model_zoo.load_url(model_urls['resnet18']))
        # ...

I can't understand the line that returns `layers` wrapped in an `nn.Sequential`, and I didn't find a specific explanation in the reference books. Does it mean that the value finally returned is just `layers`? For example, if `layers` is some list, is the return value after executing this line still that same list? If this is the case, why not use `return layers` directly (I don't know whether this is feasible)?

Answer: `layers` in this snippet is a standard Python list. First of all, Python lists are not registered in an `nn.Module`, which will lead to issues: the layers inside the list are invisible to the module, so their parameters are not tracked. That is why there exists a list-like layer which is itself an `nn.Module` (`nn.ModuleList`).

Secondly, `nn.Sequential` runs its layers one after another in a single call. With three layers, it takes the input, runs layer1, takes output1 and feeds it to layer2, then takes output2 and feeds it to layer3, giving output3 as the result. Writing those three calls out by hand on the input and calling the `nn.Sequential` once are equivalent, but with `nn.Sequential` the same code fits in a single line. The same construction appears in classifier heads such as `classifier = nn.Sequential(nn.Linear(2048, 512), nn.ReLU(), ...)`.

So `nn.Sequential` is a construction you use when you want to run certain layers sequentially. It makes the forward pass readable and compact. In the code you are pointing to, they build different ResNet architectures with the same function. They return an `nn.Sequential` because the caller can then run it without knowing what is inside. Does it consist of 10 layers? It will run those 10. Is it a single one? No problem.
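The difference between returning a plain list and returning an `nn.Sequential` can be checked directly. The following is a minimal sketch, not the ResNet code itself: the class names `PlainListNet` and `SequentialNet` and the layer sizes are made up for illustration. It assumes PyTorch is installed, and it compares how many parameters each module registers and whether chaining the layers by hand matches a single `nn.Sequential` call.

```python
import torch
import torch.nn as nn


class PlainListNet(nn.Module):
    """Hypothetical module that keeps its layers in a plain Python list."""

    def __init__(self):
        super().__init__()
        # A plain list attribute is not registered by nn.Module,
        # so the layers inside it are invisible to .parameters().
        self.layers = [nn.Linear(4, 8), nn.ReLU(), nn.Linear(8, 2)]

    def forward(self, x):
        for layer in self.layers:
            x = layer(x)
        return x


class SequentialNet(nn.Module):
    """Hypothetical module that wraps the same kind of list in nn.Sequential."""

    def __init__(self):
        super().__init__()
        layers = [nn.Linear(4, 8), nn.ReLU(), nn.Linear(8, 2)]
        # *layers unpacks the list into separate arguments;
        # nn.Sequential registers each one as a submodule.
        self.block = nn.Sequential(*layers)

    def forward(self, x):
        # One call runs every layer in order.
        return self.block(x)


torch.manual_seed(0)
x = torch.randn(3, 4)  # a batch of 3 inputs with 4 features each

plain = PlainListNet()
seq = SequentialNet()

# Count registered parameter tensors (weights and biases).
n_plain = len(list(plain.parameters()))
n_seq = len(list(seq.parameters()))

# Chain the layers by hand and compare with the single Sequential call.
out_manual = x
for layer in seq.block:
    out_manual = layer(out_manual)
out_seq = seq(x)
same = torch.equal(out_manual, out_seq)

print(n_plain, n_seq, same)
```

Because the list-based module registers no parameters, an optimizer built from `plain.parameters()` would have nothing to update, and `state_dict()` would not save those layers. This is the practical reason to return an `nn.Sequential` (or to use `nn.ModuleList`) instead of the raw list.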
